The Pentagon is going to make one cloud vendor exceedingly happy when it chooses the winner of the $10 billion, ten-year enterprise cloud project dubbed the Joint Enterprise Defense Infrastructure (or JEDI for short). The contract is designed to establish the cloud technology strategy for the military over the next 10 years as it begins to take advantage of current trends like Internet of Things, artificial intelligence and big data.

Ten billion dollars spread out over ten years may not entirely alter a market that’s expected to reach $100 billion a year very soon, but it is substantial enough to give a lesser vendor much greater visibility, and possibly deeper entree into other government and private sector business. The cloud companies certainly recognize that.

Photo: Glowimages/Getty Images

That could explain why they are tripping over themselves to change the contract dynamics, insisting, maybe rightly, that a multi-vendor approach would make more sense.

One look at the Request for Proposal (RFP) itself, which has dozens of documents outlining various criteria from security to training to the specification of the single award itself, shows the sheer complexity of this proposal. At the heart of it is a package of classified and unclassified infrastructure, platform and support services with other components around portability. Each of the main cloud vendors we’ll explore here offers these services. They are not unusual in themselves, but they do each bring a different set of skills and experiences to bear on a project like this.

It’s worth noting that the DOD is not just interested in technical chops. It is also looking closely at pricing and has explicitly asked for specific discounts that would be applied to each component. The RFP process closes on October 12th and the winner is expected to be chosen next April.

Amazon

What can you say about Amazon? They are by far the dominant cloud infrastructure vendor. They have the advantage of having scored a large government contract in the past when they built the CIA’s private cloud in 2013, earning $600 million for their troubles. They also offer GovCloud, a product designed to host sensitive data that came out of this project.

Jeff Bezos, Chairman and founder of Amazon.com. Photo: Drew Angerer/Getty Images

Many of the other vendors worry that this gives Amazon a leg up on this deal. While five years is a long time, especially in technology terms, if anything, Amazon has tightened control of the market. Heck, most of the other players were just beginning to establish their cloud business in 2013. Amazon, which launched in 2006, has maturity the others lack and they are still innovating, introducing dozens of new features every year. That makes them difficult to compete with, but even the biggest player can be taken down with the right game plan.

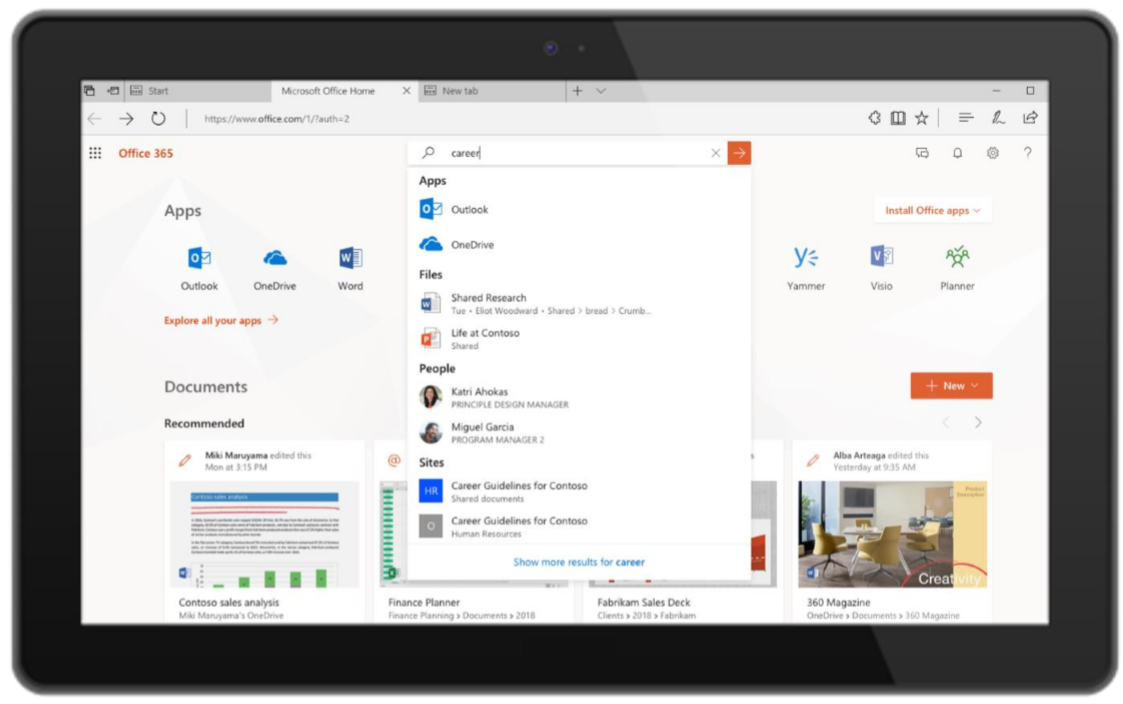

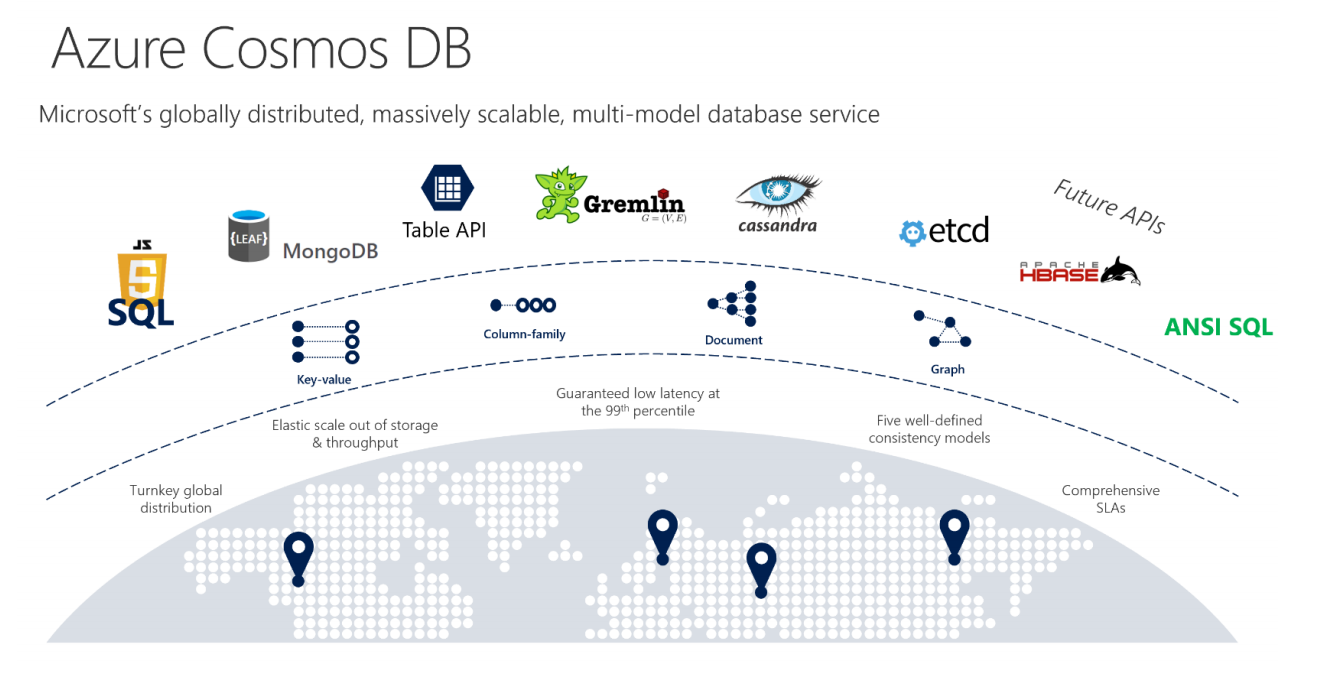

Microsoft

If anyone can take Amazon on, it’s Microsoft. While they were somewhat late to the cloud, they have more than made up for it over the last several years. They are growing fast, yet are still far behind Amazon in terms of pure market share. Still, they have a lot to offer the Pentagon, including a combination of Azure, their cloud platform, and Office 365, the popular business suite that includes Word, PowerPoint, Excel and Outlook email. What’s more, they have a fat contract with the DOD for $900 million, signed in 2016 for Windows and related hardware.

Microsoft CEO, Satya Nadella Photo: David Paul Morris/Bloomberg via Getty Images

Azure Stack is particularly well suited to a military scenario. It’s a private cloud you can stand up to run a mini private version of the Azure public cloud, and it’s fully compatible with Azure’s public cloud in terms of APIs and tools. The company also has Azure Government Cloud, which is certified for use by many of the U.S. government’s branches, including DOD Level 5. Microsoft brings a lot of experience working inside large enterprises and government clients over the years, meaning it knows how to manage a large contract like this.

Google

When we talk about the cloud, we tend to think of the Big Three. The third member of that group is Google. They have been working hard to establish their enterprise cloud business since 2015 when they brought in Diane Greene to reorganize the cloud unit and give them some enterprise cred. They still have a relatively small share of the market, but they are taking the long view, knowing that there is plenty of market left to conquer.

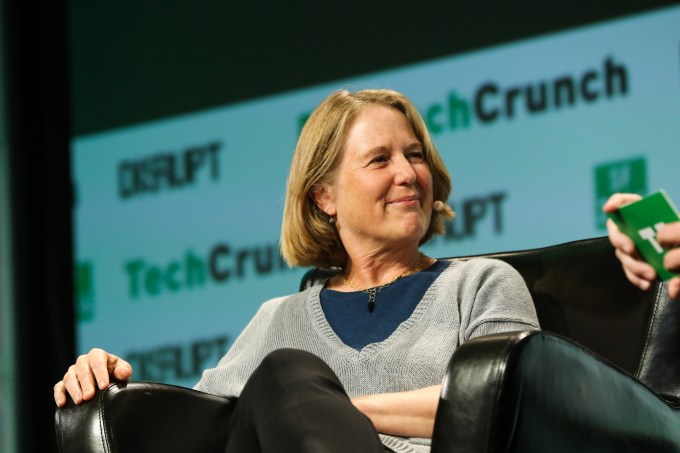

Head of Google Cloud, Diane Greene Photo: TechCrunch

They have taken an approach of open sourcing a lot of the tools they used in-house, then offering cloud versions of those same services, arguing that nobody knows better how to manage large-scale operations than they do. They have a point, and that could play well in a bid for this contract, but they also stepped away from an artificial intelligence contract with DOD called Project Maven when a group of their employees objected. It’s not clear whether that would be held against them in the bidding process here.

IBM

IBM has been using its checkbook to build a broad platform of cloud services since 2013, when it bought SoftLayer to give it infrastructure services. It has added software and development tools over the years, emphasizing AI, big data, security, blockchain and other services. All the while, it has been trying to take full advantage of its artificial intelligence engine, Watson.

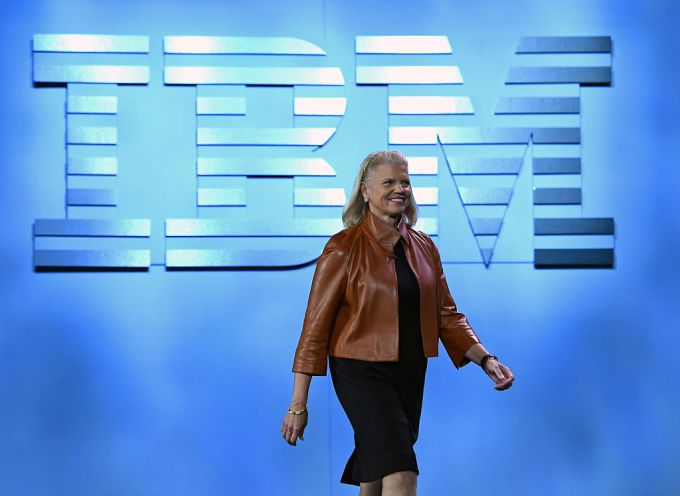

IBM Chairman, President and CEO Ginni Rometty Photo: Ethan Miller/Getty Images

As one of the primary technology brands of the 20th century, the company has vast experience working with contracts of this scope and with large enterprise clients and governments. It’s not clear if this translates to its more recently developed cloud services, or if it has the cloud maturity of the others, especially Microsoft and Amazon. In that light, it would have its work cut out for it to win a contract like this.

Oracle

Oracle has been complaining since last spring to anyone who will listen, including reportedly the president, that the JEDI RFP is unfairly written to favor Amazon, a charge that DOD firmly denies. They have even filed a formal protest against the process itself.

That could be a smoke screen because the company was late to the cloud, took years to take it seriously as a concept, and barely registers today in terms of market share. What it does bring to the table is broad enterprise experience over decades and one of the most popular enterprise databases of the last 40 years.

Larry Ellison, chairman of Oracle. Photo: David Paul Morris/Bloomberg via Getty Images

It recently began offering a self-repairing database in the cloud that could prove attractive to DOD, but whether its other offerings are enough to help it win this contract remains to be seen.